Frankenstein GPUs.

Training LLMs on Mixed Hardware

Guiding Principles

Hackability over Polish

Enterprise tools like DeepSpeed are amazing but bloated. I needed something small that I could break and fix in an afternoon.

Hardware Agnostic

The software shouldn't care if the GPU is Green (Nvidia) or Red (AMD). If it can do math, it should help train the model.

Respect the Bottleneck

In a mixed setup, you only move as fast as your slowest card. The software needs to be smart enough to give the slow card less work.

Project Overview

Insight

Solution

Layer Splitting: The model gets chopped up into contiguous blocks of layers. For example, GPU A takes layers 0-15 and GPU B takes layers 16-31.

The Coordinator: One script loads the data and sends tensors over the network to the other machine.

The Profiler: A script that runs a quick speed test on each GPU before training to figure out the perfect split.
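To make the split idea concrete, here is a minimal sketch of how measured speeds could be turned into layer ranges. It is not the HeteroShard code: the function name `split_layers` and the idea of using a single throughput number per device are assumptions for illustration, and it ignores memory limits entirely.

```python
def split_layers(num_layers, throughputs):
    """Assign contiguous layer ranges in proportion to each device's speed.

    `throughputs` is a hypothetical list of relative speeds, one per device
    (for example, steps per second from a quick benchmark).
    Returns half-open (start, end) ranges, one per device.
    """
    total = sum(throughputs)
    # Ideal (fractional) number of layers for each device.
    shares = [num_layers * t / total for t in throughputs]
    counts = [int(s) for s in shares]

    # Rounding down leaves a few layers unassigned; give them to the
    # devices with the largest fractional remainder.
    leftover = num_layers - sum(counts)
    by_remainder = sorted(
        range(len(shares)), key=lambda i: shares[i] - counts[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        counts[i] += 1

    ranges, start = [], 0
    for count in counts:
        ranges.append((start, start + count))
        start += count
    return ranges


if __name__ == "__main__":
    # Two equally fast devices split a 32-layer model down the middle.
    print(split_layers(32, [1.0, 1.0]))
    # A device that is three times faster gets three times the layers.
    print(split_layers(32, [3.0, 1.0]))
```

A real balancer would also cap each range by how many layers fit in that device's VRAM, which this sketch leaves out.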

How I Built It

The Rabbit Hole

I looked at everything: DeepSpeed, Megatron-LM, torchgpipe. They were all too rigid. I wanted something that felt like LEGOs, not a pre-built model kit.

Building the Bridge

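I designed a simple topology. Machine A runs its layers, then sends the resulting tensors over the local network (TCP/IP) to Machine B. Because the two machines only exchange data over the network and never share memory, they don't need to share drivers either: each one can run its own vendor stack.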
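A minimal sketch of what that hand-off could look like, using only the Python standard library. This is an illustration under assumptions, not the project's actual wire format: the helper names `send_activations` and `recv_activations` are hypothetical, the tensor is flattened to a plain list of 32-bit floats, and shape and dtype metadata are left out. TCP is a byte stream, so each message carries a length prefix to tell the receiver where it ends.

```python
import socket
import struct
from array import array

# 8-byte big-endian length prefix in front of every payload.
HEADER = struct.Struct("!Q")


def send_activations(sock, values):
    """Send a flat sequence of floats as one length-prefixed message."""
    # array("f") packs 32-bit floats in native byte order, so this sketch
    # assumes both machines share the same endianness.
    payload = array("f", values).tobytes()
    sock.sendall(HEADER.pack(len(payload)) + payload)


def _recv_exact(sock, n):
    """Read exactly n bytes; recv() may return fewer than requested."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("peer closed the connection mid-message")
        buf.extend(chunk)
    return bytes(buf)


def recv_activations(sock):
    """Receive one length-prefixed message and return it as a list of floats."""
    (length,) = HEADER.unpack(_recv_exact(sock, HEADER.size))
    values = array("f")
    values.frombytes(_recv_exact(sock, length))
    return values.tolist()


if __name__ == "__main__":
    # socketpair() stands in for the real Machine A -> Machine B connection.
    machine_a, machine_b = socket.socketpair()
    send_activations(machine_a, [1.0, 2.5, -3.0])
    print(recv_activations(machine_b))
    machine_a.close()
    machine_b.close()
```

For training rather than inference, the same channel would also have to carry gradients back from Machine B to Machine A during the backward pass.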

Making it Smart

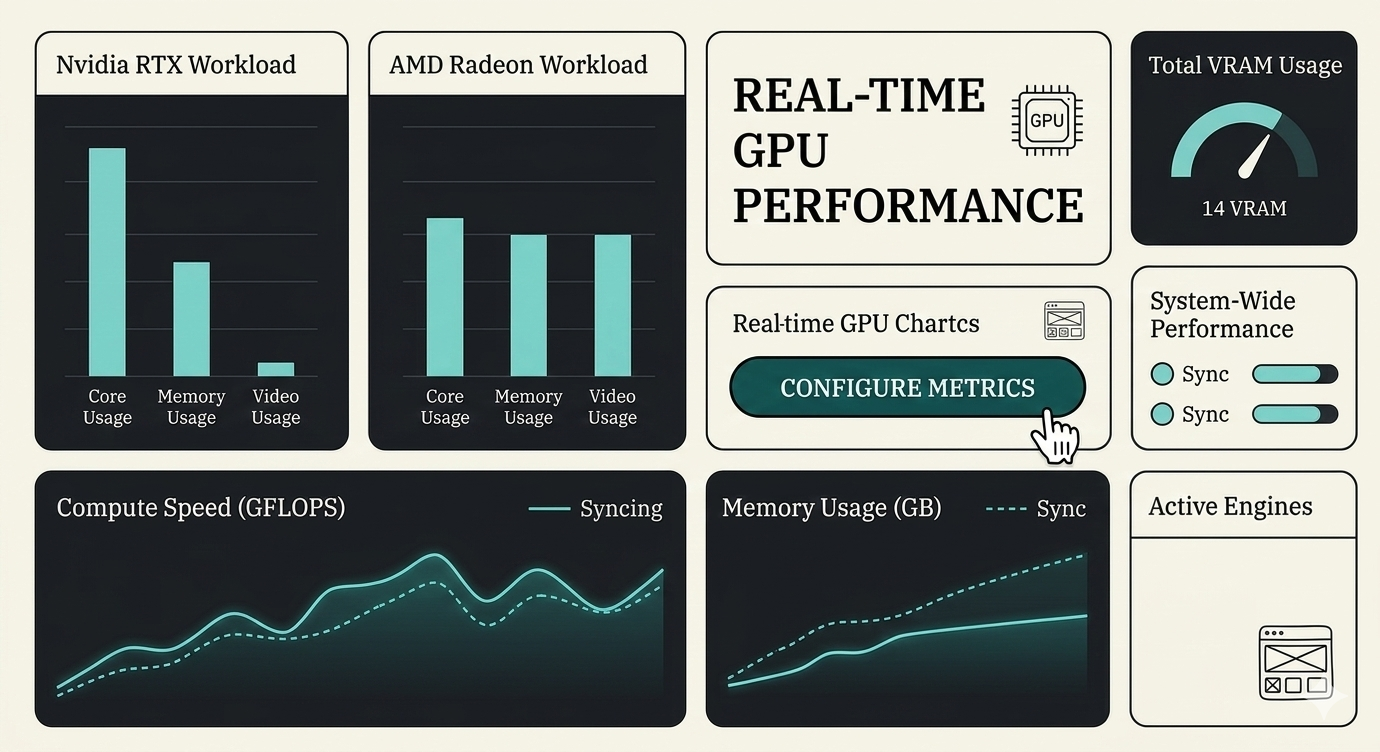

I needed to solve the "slow kid in class" problem. I implemented a profiler (inspired by the Cephalo paper) that measures compute speed and memory usage to auto-balance the load.
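The timing half of such a profiler can be sketched in a few lines. This is a generic warm-up-then-measure loop, not the Cephalo method or the project's implementation; `measure_throughput` and `step_fn` are hypothetical names, and memory measurement is not covered here.

```python
import time


def measure_throughput(step_fn, warmup=2, iters=5):
    """Return how many times per second `step_fn` can run on this device.

    `step_fn` is a hypothetical callable that runs one forward/backward
    pass over a small block of layers.
    """
    # Warm-up runs absorb one-time costs (allocation, kernel compilation)
    # so they don't distort the measurement.
    for _ in range(warmup):
        step_fn()

    start = time.perf_counter()
    for _ in range(iters):
        step_fn()
    elapsed = time.perf_counter() - start

    # Guard against a zero reading when step_fn is trivially fast.
    return iters / max(elapsed, 1e-9)


if __name__ == "__main__":
    # Stand-in workload; on a real GPU this would be a model step.
    def fake_step():
        sum(i * i for i in range(50_000))

    print(f"{measure_throughput(fake_step):.1f} steps/s")
```

GPU work is launched asynchronously, so on real hardware `step_fn` would need to wait for the device to finish (in PyTorch, by calling `torch.cuda.synchronize()`) before returning, otherwise the clock only measures launch time. The resulting numbers per device are the kind of input a load balancer can use to give the slower card fewer layers.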

The "Will it Blend?" Test

I benchmarked three configurations: a single RTX 5090 (289s), the AMD Strix Halo alone, and the two combined in the distributed pipeline.

The Results

It actually worked.

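By splitting the VRAM load across both machines, I could train faster than on the single high-end card because the run was no longer bottlenecked on memory. Single RTX 5090: 289 seconds. Distributed Pipeline: 184 seconds. That is roughly 1.57x faster, or about 36% less wall-clock time.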

Speed isn't everything

In pipeline parallelism, network latency is a real factor. But for LLMs, VRAM is usually the bigger constraint. Trading a little network lag for double the VRAM is a trade I'll take any day.

Open Source is key

All the enterprise solutions ignored this use case because it doesn't make money. But for the homelab community, this is a game changer. **GitHub:** [github.com/0xrushi/HeteroShard](https://github.com/0xrushi/HeteroShard)