Operationalizing Predictive Analytics

From Experiment to Enterprise Platform

Engineering Strategy

Engineering Before Algorithms

What looked like a modeling problem was actually a data availability problem. We prioritized fixing the data supply chain before tuning the predictive math.

Integration Is The Product

A forecast is useless if it sits in a database. We designed the architecture backward from the operational dashboard, ensuring insights were consumable in real time.

Pipelines Over Patches

We moved away from ad-hoc data pulls and built repeatable, monitored pipelines. Reliability was treated as a feature, not an afterthought.

Project Overview

Industry

Enterprise Operations

Team

Data Engineering, Data Science, Operations Stakeholders

Role

Data Engineer, Platform Architect

Context

A large operational organization had developed an early forecasting model to predict critical field events. While the math showed promise, the model was a "science project" stuck in a silo.

My Role & Impact

Insight

Solution

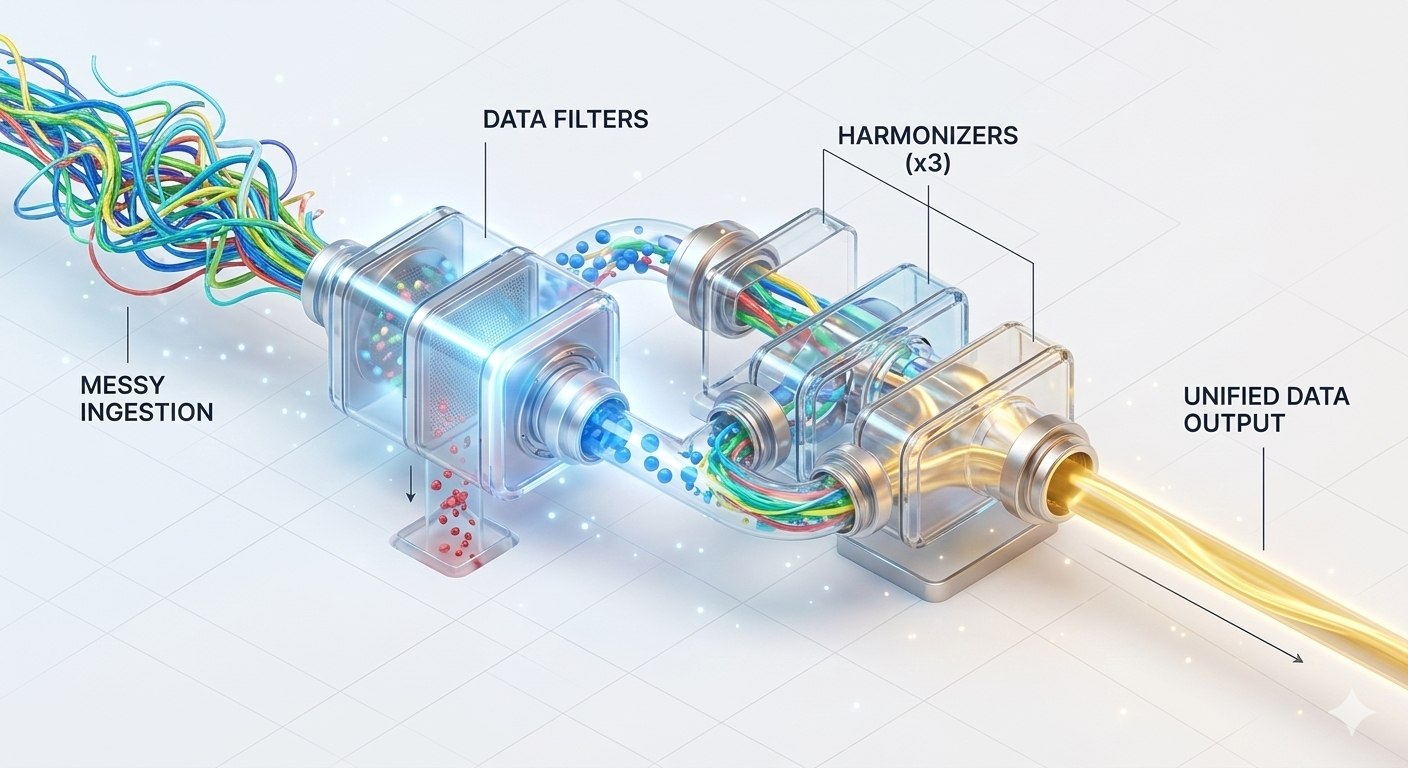

Unified Data Layer: A consolidated pipeline architecture merging internal logs, external signals, and system states.

Scalable Feature Engine: Automated workflows to turn raw, sparse data into reliable predictive features.

Production Integration: A robust framework for embedding model outputs directly into operational platforms.

Process & Execution

Architecture Assessment & Discovery

Reframed the initiative from a modeling exercise to a platform engineering effort. We audited existing workflows and identified gaps in four core areas: data fragmentation, feature engineering, deployment, and integration.

Building the Unified Pipeline

Designed pipelines to consolidate distributed predictive signals.

This involved heavy timestamp alignment, schema harmonization, and event normalization to create a single, trustworthy analytical dataset.
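
As a rough illustration of those three steps, the sketch below maps two sources onto one schema, converts timestamps to UTC and floors them to a shared bucket, and normalizes event labels. The source names, field names, and the 15-minute bucket are hypothetical choices for the example, not details of the actual pipeline.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-source mappings: source field -> unified field.
FIELD_MAPS = {
    "internal_logs": {"ts": "event_time", "asset": "asset_id", "type": "event_type"},
    "external_signals": {"observed_at": "event_time", "site": "asset_id", "signal": "event_type"},
}


def to_utc(value: str) -> datetime:
    """Parse an ISO 8601 timestamp and convert it to UTC.

    Assumption: timestamps without an offset are already in UTC.
    """
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc)


def floor_to_bucket(ts: datetime, minutes: int = 15) -> datetime:
    """Align a timestamp to the start of its bucket (width must divide 60)."""
    return ts - timedelta(
        minutes=ts.minute % minutes,
        seconds=ts.second,
        microseconds=ts.microsecond,
    )


def harmonize(source: str, record: dict) -> dict:
    """Rename fields to the unified schema, then align and normalize values."""
    mapping = FIELD_MAPS[source]
    unified = {target: record[src] for src, target in mapping.items()}
    unified["event_time"] = floor_to_bucket(to_utc(unified["event_time"]))
    unified["event_type"] = unified["event_type"].strip().lower().replace(" ", "_")
    unified["asset_id"] = str(unified["asset_id"])
    unified["source"] = source  # keep lineage for later debugging
    return unified
```

With this shape, a log entry stamped `2024-03-01T08:14:59` and an external signal stamped `2024-03-01T10:07:30+02:00` land in the same UTC bucket and can be joined on `asset_id` and `event_time`.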

Feature Engineering at Scale

Forecast performance depended on signal quality. We built infrastructure to handle sparse event indicators and organized the datasets to support repeatable feature generation, which enabled faster experimentation.
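
One common way to make sparse events usable by a model is to project them onto a regular time grid and derive trailing-window features. The sketch below is a minimal version of that idea; the function name, the one-hour step, the 24-hour window, and the two features are illustrative assumptions rather than the features used in the project.

```python
from datetime import datetime, timedelta


def build_features(event_times, start, end,
                   step=timedelta(hours=1), window=timedelta(hours=24)):
    """Turn sparse event timestamps into one dense feature row per time step."""
    events = sorted(event_times)
    rows = []
    t = start
    while t <= end:
        # Only events at or before t are visible, which avoids look-ahead leakage.
        past = [e for e in events if e <= t]
        recent = [e for e in past if e > t - window]
        rows.append({
            "time": t,
            "events_in_window": len(recent),
            "hours_since_last_event": (
                (t - past[-1]).total_seconds() / 3600 if past else None
            ),
        })
        t += step
    return rows
```

Because every row is computed only from events at or before its own timestamp, the same function can be rerun for backfills and for live scoring without changing the feature definitions.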

The "Last Mile" Integration

Ensured predictive insights were actually consumed. We defined data interfaces for dashboard integration and built output pipelines that fed directly into the tools operators used daily.
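
A data interface of this kind can be sketched as a small, validated record type plus a serializer. Everything named here (`ForecastRecord`, its fields, `to_dashboard_payload`, and the JSON shape) is a hypothetical contract for illustration; the real dashboard interface is not described in this write-up.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ForecastRecord:
    """Hypothetical contract between the model output and the dashboard."""
    asset_id: str
    forecast_time: datetime   # start of the predicted window
    generated_at: datetime    # when the model produced this forecast
    event_probability: float
    model_version: str

    def __post_init__(self):
        # Reject malformed output before it reaches operators.
        if not 0.0 <= self.event_probability <= 1.0:
            raise ValueError("event_probability must be within [0, 1]")
        for ts in (self.forecast_time, self.generated_at):
            if ts.tzinfo is None:
                raise ValueError("timestamps must be timezone-aware")


def to_dashboard_payload(records):
    """Serialize forecast records to a JSON array with ISO 8601 timestamps."""
    rows = []
    for r in records:
        row = asdict(r)
        row["forecast_time"] = r.forecast_time.isoformat()
        row["generated_at"] = r.generated_at.isoformat()
        rows.append(row)
    return json.dumps(rows, sort_keys=True)
```

Validating at the boundary means a bad model run fails loudly in the output pipeline instead of showing up as a misleading number in an operator's tool.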

---

Key Outcomes

Foundations First

By prioritizing data engineering over model tuning, we created a dataset that actually supported high-granularity forecasting, proving that better pipes lead to better predictions.

From Project to Product

We successfully transformed an isolated proof-of-concept into a scalable capability. The model is no longer a static experiment; it is an embedded part of the operational workflow.

The Engineering Edge

The project demonstrated that in complex operational environments, the barrier to AI isn't the AI itself; it's the messy, fragmented data ecosystem underneath it. We fixed the ecosystem to unlock the value.